Most AI initiatives don't fail because the technology breaks. They fail because people aren't ready to change how they work.

According to Prosci's research on AI adoption across the enterprise, 43% of respondents attributed AI adoption failure to insufficient executive sponsorship. Not bad data. Not wrong models. A lack of alignment between leadership and the people expected to use the tools.

MIT's NANDA report puts a finer point on it: roughly 95% of enterprise AI pilots fail to deliver measurable P&L impact. Not because the models don't work. Because organizations treat AI like a tool they hand to their teams instead of the behavioral shift it truly is. As HatchWorks AI puts it in their enterprise AI workshops: it's like putting a treadmill in every home and expecting to cure heart disease. The equipment isn't the problem. The habits are.

If you're leading an AI rollout right now and feeling the friction (teams dragging their feet, pilots stalling, executives asking for adoption numbers you can't produce), that tension is worth paying attention to. Resistance isn't a defect in your workforce. It's a signal that something structural needs to change before the tools will stick.

This article walks through a practical framework for change management for AI adoption. It's built on research from Prosci, McKinsey, and MIT, and grounded in what HatchWorks AI has learned deploying Generative-Driven Development™ (GenDD) with enterprise engineering teams.

Even as an AI company, HatchWorks has been candid that they struggled to fully adopt AI throughout their own organization before building a structured methodology around it. If your team is skeptical, stretched, or stuck, this is where to start. And if you want to understand the deeper emotional dynamics at play, AI anxiety in the workplace is worth reading alongside this piece.

What Is AI Change Management and Why Does It Matter Now?

AI change management is the structured process of helping people, teams, and organizations move from how they work today to how they'll work alongside artificial intelligence. It covers everything from leadership alignment and workflow redesign to training, governance, and ongoing measurement.

That definition sounds similar to traditional change management. It isn't.

A standard tech rollout (new CRM, new ERP, new project management tool) digitizes an existing workflow. People learn a new interface, but the underlying decisions and handoffs stay roughly the same. AI is different. It restructures decision-making itself. It reshapes roles. It asks employees to trust outputs they can't always see, explain, or verify.

IBM frames this well: traditional change management relies on static plans and periodic check-ins. AI requires organizations to sense change early and respond in real time, because the technology itself is evolving faster than any rollout plan can anticipate.

The stakes of getting this wrong are steep. Organizations that skip structured change management end up with what Fast Company's reporting calls "regret spend," budgets poured into licenses and pilots that never scale. Stalled pilots become the norm. Strong AI talent leaves for companies with clearer direction. And worst of all, trust in AI erodes across the org, making the next attempt even harder.

The bottom line: AI adoption is a people problem, not a technology problem. Or as HatchWorks frames it in their AI workshops: AI is as much about building new habits as it is about the technology. Without structured change management, most organizations settle for cosmetic adoption. Tools deployed, logins tracked, but no actual change in how work gets done.

Why Your Team Resists Adopting AI (And Why That's Normal)

When your team pushes back on AI, the instinct is to treat it as an obstacle. Reframe it. Resistance is your team surfacing risks and blind spots that early adopters often miss. The organizations that engage skeptics early, rather than bulldozing past them, build more durable, trustworthy AI programs.

McKinsey's research on AI adoption supports this directly: inviting non-adopters into the process early surfaces challenges that enthusiastic early users overlook. That skepticism, when channeled constructively, often leads to better deployment decisions.

But "resistance" is too vague a label to act on. In HatchWorks' experience running AI workshops across industries, team pushback tends to fall into six distinct profiles, each requiring a different response.

Job-security jitters. The foundational fear that AI will replace people. This is widespread, especially outside of leadership circles, and it's the one that goes unspoken the longest.

Overwhelmed non-starters. Paralyzed by choice and noise. These are the people most in need of guided onboarding and clear, specific use cases rather than a broad "go explore AI" mandate.

Trust skeptics. Concerned about "black box" decisions. This group blocks actual usage unless you can demonstrate transparency around how AI reaches its outputs.

Mis-incentivized performers. Their KPIs still reward the old way of working. Without fixing this, even willing adopters have no structural reason to change.

Change-fatigue veterans. Jaded by past failed transformations. They've seen "the next big thing" come and go, and they can derail momentum unless you can differentiate AI as something that delivers tangible, personal value.

Data guardians. AI adoption can't happen without data access. Their concerns about security, governance, and data quality are valid and must be addressed during implementation, not after.

Here's where the research maps to these profiles.

Fear and Uncertainty in the AI World

Prosci's research found that nearly 29% of change practitioners report wariness toward AI. The drivers are predictable: job security, role displacement, and a general anxiety around the unknown.

This isn't new. As HatchWorks points out, the same emotional pattern played out when the printing press threatened scribes, when automobiles replaced horses, and when strong individual contributors were asked to become managers who trusted others to do the work they used to own. The feeling of letting go of a competency that defined your value is ancient. AI just happens to be the latest trigger.

A February 2026 Harvard Business Review article put it plainly: AI adoption often stalls because employees' anxiety about relevance, identity, and job security drives surface-level usage without real commitment. Leaders who treat this as a psychological and contextual challenge, not just a training gap, see far better results.

Skills Gaps and Lack of AI Capabilities Training

Prosci's data shows that 38% of AI adoption challenges trace back to insufficient training on AI tools. People aren't given the context, the hands-on practice, or the role-specific guidance they need to feel competent.

Generic "intro to AI" workshops don't fix this. A VP of Engineering needs a different onboarding path than a QA lead or a product manager. When training is one-size-fits-all, it signals to employees that leadership hasn't thought deeply about how AI changes their specific work, which reinforces the fear rather than reducing it.

There's also what HatchWorks calls "the blank canvas problem." When you hand someone an AI tool and say "you can ask it anything," the most common response is paralysis. If you can ask anything, what do you ask? Teams that lack clear use cases and structured starting points end up with false starts that erode confidence before real adoption even begins.

Governance Confusion and Trust Deficits

Twenty-two percent of Prosci's respondents cite governance and compliance concerns as a barrier. Without clear guardrails, employees don't know what's safe to use, how data is handled, or who's accountable when an AI-generated output goes wrong.

This ambiguity creates paralysis. People default to the old way of doing things, not because they're resistant to change, but because no one has told them where the boundaries are.

Resistance isn't the enemy of AI adoption. Ignoring it is. The organizations that engage skeptics early build more durable, trustworthy AI programs. For a deeper look at the emotional mechanics behind this pushback, read AI anxiety in the workplace.

A Step-by-Step Framework for Change Management for AI Adoption

What follows is the operational core of this post. HatchWorks calls it the CEO's Tactical AI Kickstart Plan: five steps every leader must take to build an AI-enabled organization, plus a sixth step on measurement that separates teams who adopt AI from teams who transform with it.

This isn't a one-time checklist. The strongest AI adoption programs revisit every step as the organization matures.

Step 1: It Starts with the CEO (Top Down)

AI adoption that sticks begins at the top. Not with a memo endorsing AI, but with the CEO and executive team visibly using the tools themselves.

This means three things. First, invest in AI executive training so leadership can think strategically about where AI fits in the business, not just approve budgets for it. Second, use the tools personally. When the CEO models AI usage, it sends a signal that no town hall can replicate. People watch what leaders do, not what they say. Third, consume AI content regularly. Podcasts, YouTube, LinkedIn. You don't need a PhD in machine learning. You need to stay current enough to ask informed questions and recognize real opportunity when your team brings it forward.

Prosci's research reinforces why this matters: over half of managers and employees say leaders don't outline clear success metrics when managing change. That clarity has to come from the top.

Step 2: Create a Cross-Functional AI Task Force

AI touches every department. A single owner (CTO, CDO, or "the AI person") isn't sufficient to drive adoption across all of them. You need a cross-functional task force with real accountability.

Three priorities for this group. Create AI champions across the entire business, people in each department who are excited about AI and willing to experiment publicly. Appoint an AI officer or task force lead with clear accountability, someone who owns the initiative and reports on progress. And document the learnings, both good and bad, and share them across the organization. Transparency about what's working and what isn't builds more trust than polished success stories.

For mid-market companies that can't justify a full-time Chief AI Officer, the fractional model offers a fast path to strategic oversight. A fractional Chief AI Officer provides executive-level AI leadership (strategy, governance, use-case prioritization) without the $300K+ salary commitment. It's particularly effective for organizations that need direction now but aren't ready to build a permanent AI leadership function.

Step 3: Train Your Teams (Bottom Up)

AI projects fail as often because of the people side of the transformation as for any technical reason. Training is where top-down mandate meets bottom-up capability.

Three moves that accelerate this. Invest in one tool to start. Choose one and go rather than getting stuck in analysis paralysis evaluating twelve platforms. Provide AI team training for all, not just engineering. If you don't have in-house expertise, bring in a third party to train. And run a hackathon. Do a couple a year based on a real business challenge, not a toy problem. Hackathons build confidence, surface hidden talent, and generate use cases you wouldn't find in a planning meeting.

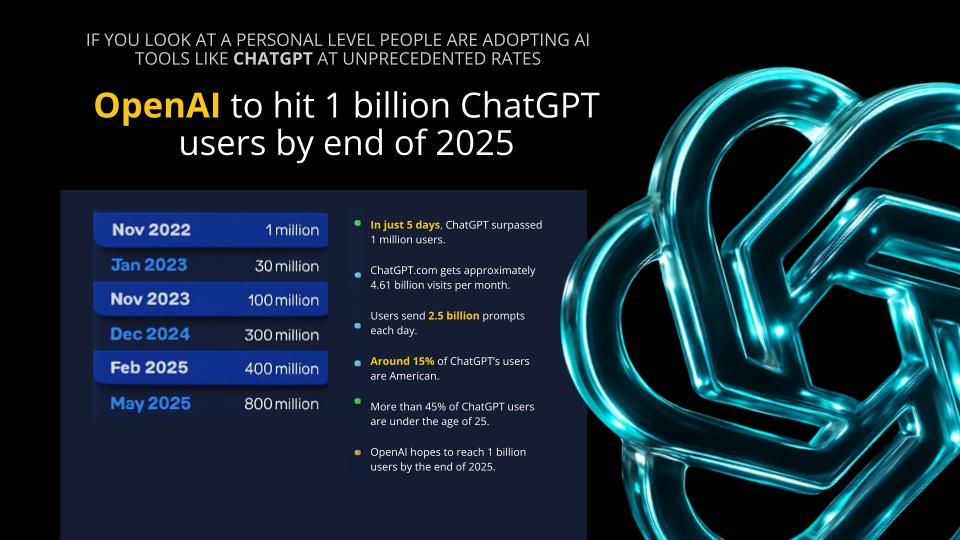

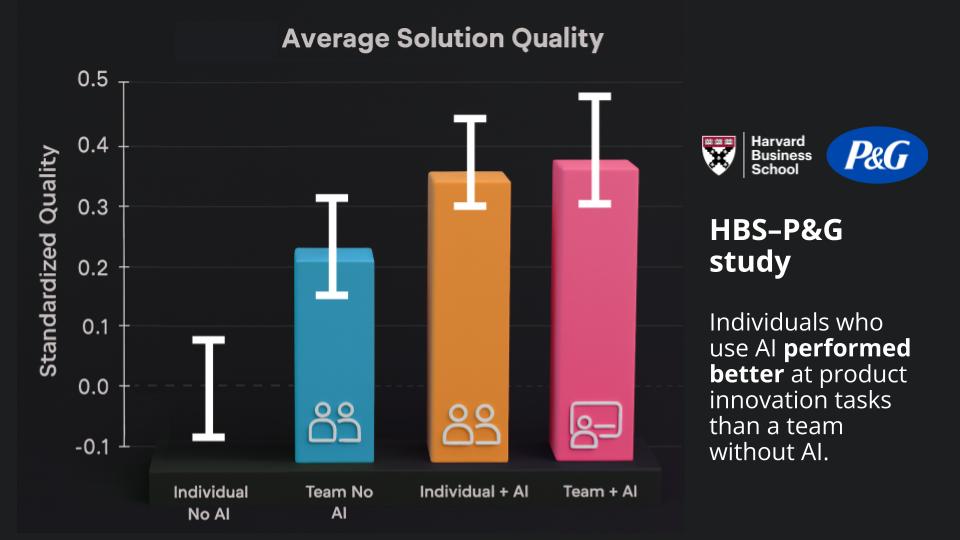

The value of training is clear at the individual level. A Harvard Business School and Procter & Gamble study found that individuals using AI performed on par with entire teams that didn't have access to it.

The challenge is translating that individual value into organizational change, which is why training has to be paired with workflow redesign.

This is the core of what HatchWorks' GenDD methodology addresses. GenDD structurally redesigns the software development lifecycle around AI: explicit division of labor between humans and AI, context baked into every execution loop, and governance built in from day one. It's not a prompt strategy or a tool recommendation. It's an operating model. To see how this works at the team level, read about the GenDD Pod, a three-person unit that delivers the output of an eight-to-twelve-person traditional team by redesigning who does what.

Step 4: Challenge Your Team (Why Not AI?)

Once your teams have access and training, shift the cultural expectation. The question should no longer be "should we use AI for this?" It should be "why is AI not the solution or an option?"

Three ways to embed this mindset. Look at new projects and ask your team if they explored how AI could accelerate delivery. When new resource requests come in, ask whether the job could be automated or augmented with AI before defaulting to a new hire. And hire with AI as a baseline skill going forward. Everyone you bring on should have a skill set that includes AI fluency.

Shopify's leaked internal memo captures where this mindset leads: "Reflexive AI usage is now a baseline expectation. Before asking for more headcount and resources, teams must demonstrate why they cannot get what they want done using AI." That level of cultural integration doesn't happen on day one. It's the result of sustained reinforcement where each win builds the case for the next.

Step 5: Take the First Action (Create a Roadmap)

With leadership aligned, a task force in place, teams trained, and the right cultural expectations set, it's time to build a roadmap so you have a plan, not just enthusiasm.

Three priorities here. Identify use cases. If you don't have in-house experts, hire a third party to facilitate the session. A structured approach to identifying AI use cases can help you prioritize by business impact and implementation feasibility rather than by who shouts loudest in the planning meeting. Prioritize by ROI. Bubble up the highest-return use cases to get a quick win that builds organizational momentum. And revisit quarterly. Look at your use cases on a regular cadence to see if priorities or ROI projections have shifted, because they will.
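As a rough illustration of ranking use cases by impact and feasibility, here is a minimal Python sketch. The 1-to-5 scales, the multiplicative score, and the example use cases are all hypothetical assumptions made for illustration. They are not part of HatchWorks' method or any published framework.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int       # assumed scale: 1 (low business impact) to 5 (high)
    feasibility: int  # assumed scale: 1 (hard to implement) to 5 (easy)


def priority_score(use_case: UseCase) -> int:
    """Hypothetical score: impact multiplied by feasibility."""
    return use_case.impact * use_case.feasibility


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Return use cases ordered from highest to lowest score."""
    return sorted(use_cases, key=priority_score, reverse=True)


# Illustrative backlog; names and ratings are made up.
backlog = [
    UseCase("Meeting notes summary", impact=2, feasibility=4),
    UseCase("Contract clause review", impact=5, feasibility=2),
    UseCase("Support ticket triage", impact=4, feasibility=4),
]

for use_case in rank(backlog):
    print(priority_score(use_case), use_case.name)
```

Re-running the ranking each quarter with updated ratings is one simple way to make the "revisit quarterly" habit concrete. The score is a conversation starter for the planning session, not a substitute for it.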

Don't underestimate the weight of what HatchWorks calls "existing technical, process, and mental debt." Your teams carry habits, assumptions, and workflows shaped by years of non-AI operations. "This is how we've always done it" is the most common and most invisible barrier to adoption. A good roadmap accounts for that debt, not just the technical implementation.

Step 6: Measure What Actually Changed

License counts and login rates don't tell you whether AI adoption is working. They tell you whether people opened the tool, not whether it changed how they work.

Track what matters: cycle time, voluntary adoption (people using the tool without being told to), error rate, throughput, and productivity gains. Build a feedback loop that captures what's working, what's stalling, and what needs to be redesigned for the next phase.
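To make those measures concrete, here is a minimal Python sketch that compares a pre-rollout baseline with a later period. The field names, the definition of "voluntary user," and the sample numbers are assumptions chosen for illustration. Your own tracking data will look different.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    team_size: int
    voluntary_users: int      # assumed: people using AI without being told to
    tasks_completed: int      # throughput for the period (must be non-zero)
    total_cycle_hours: float  # summed start-to-finish time across tasks
    tasks_with_errors: int


def metrics(period: PeriodStats) -> dict[str, float]:
    """Derive the adoption measures from raw counts for one period."""
    return {
        "voluntary_adoption": period.voluntary_users / period.team_size,
        "avg_cycle_hours": period.total_cycle_hours / period.tasks_completed,
        "error_rate": period.tasks_with_errors / period.tasks_completed,
        "throughput": float(period.tasks_completed),
    }


def change(baseline: PeriodStats, current: PeriodStats) -> dict[str, float]:
    """Difference between the current period and the baseline, per measure."""
    before, after = metrics(baseline), metrics(current)
    return {name: after[name] - before[name] for name in before}


# Made-up numbers for a 20-person team, before and after rollout.
baseline = PeriodStats(team_size=20, voluntary_users=0, tasks_completed=40,
                       total_cycle_hours=320.0, tasks_with_errors=8)
current = PeriodStats(team_size=20, voluntary_users=12, tasks_completed=50,
                      total_cycle_hours=300.0, tasks_with_errors=5)

for name, delta in change(baseline, current).items():
    print(f"{name}: {delta:+.2f}")
```

The point of the comparison is the baseline: without numbers captured before the rollout, there is nothing to measure the change against.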

The framework is cyclical, not linear. Each phase feeds the next. The strongest AI adoption programs treat this as a continuous operating discipline, not a project with a finish line.

How AI Transformation Changes Roles, Not Just Tools

One of the most misunderstood aspects of AI adoption is what it does to jobs. AI doesn't eliminate roles. It rearranges the tasks inside them. Some tasks get absorbed by AI, freeing people for higher-value strategic work. Other tasks emerge that didn't exist before: prompt design, output validation, workflow orchestration, and AI governance.

The shift HatchWorks describes is from doers to orchestrators. Today's work is manual, synchronous, unintegrated, and one-to-one between a human and a tool. The future of work with AI is automated, asynchronous, fully integrated, and many-to-many, where humans orchestrate networks of AI agents rather than performing each task themselves.

McKinsey's research on the workforce impact of AI found that millennial managers (ages 35-44) report the highest levels of AI expertise at 62%, compared to 50% of Gen Z workers and 22% of baby boomers over 65. That disparity matters for change management. Organizations need role-specific adoption paths, not a single training deck that treats everyone the same.

Redefining the AI-Powered Employee

The "AI-native" employee doesn't look like someone using a chatbot to draft emails faster. They look like someone who architects, directs, and validates while AI handles the execution at volume.

HatchWorks' GenDD methodology embodies this shift. One of their software engineers put it simply: "I haven't written a line of code in three months, but I've shipped six months of work." That's not a person being replaced. That's a person whose role has been redesigned around what humans do best (judgment, architecture, quality decisions) and what AI does best (execution, documentation, pattern completion).

The goal is for AI usage to become invisible, like electricity. Nobody wakes up and thinks "today, I'm going to use electricity." It's just embedded in how everything works. AI adoption succeeds when using AI stops being a conscious decision and becomes the default way your team operates.

This reframing matters for change management because it gives employees a concrete picture of what "working with AI" actually looks like day-to-day. Abstract promises about "augmenting human potential" don't drive adoption. Tangible role redesigns do. For more on how this plays out in practice, explore how AI is reshaping project management.

AI Implementation Mistakes That Stall Business Value

Even organizations with good intentions make predictable mistakes during AI rollouts. Here are the three that stall business value most often.

Treating AI Adoption Like a Software Rollout

AI isn't ERP. You can't buy licenses, schedule a training week, send a launch email, and declare victory. ERP digitizes a process. AI changes how decisions are made, who makes them, and what "good output" looks like. Without workflow redesign, adoption stays cosmetic. People log in, dabble, and revert to the old way within weeks.

Ignoring Mid-Level Manager Resistance

Prosci's Best Practices in Change Management research identifies mid-level managers as the most resistant group during organizational change, more resistant than frontline employees. This makes sense: managers are caught between executive mandates pushing for speed and team-level pushback demanding certainty. They absorb pressure from both directions with limited authority to resolve it.

Equip them. Give them role-specific talking points, decision authority over how AI integrates into their team's workflow, and clear escalation paths for concerns. Managers who feel informed and empowered become force multipliers for adoption. Managers who feel blindsided become bottlenecks.

Bolting Governance On After Launch

Governance can't be an afterthought. When guardrails get added after deployment (after someone surfaces a hallucination in a customer-facing output, after a compliance question goes unanswered for weeks), trust takes a hit that's hard to recover from.

Organizations that embed governance from day one build trust faster and scale more confidently. That means defining acceptable use policies, establishing human review requirements, and creating clear accountability for AI outputs before the first user gets access. For a deeper look at where AI outputs go wrong, read about AI hallucination risk assessment and AI model misbehavior.

Building Trust: The Hidden Driver of Successful AI Change

Trust is the most underrated lever in AI adoption. Tools can be excellent, training can be thorough, and leadership can be aligned, but if employees don't trust the outputs, the governance, or the organization's intent, adoption stalls anyway.

Building trust isn't a one-time communication exercise. It's a structural effort that has to be embedded into how AI is deployed and managed.

Transparency Around How Artificial Intelligence Makes Decisions

Employees need to understand how AI is trained, how it's monitored, and how it's used in their specific context. They need to know what happens when an output is wrong. They need to see human oversight in the loop, not just hear about it in a slide deck.

The most effective organizations pair every AI deployment with clear documentation: what the model does, what it doesn't do, where human review is required, and how to escalate when something feels off.
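One lightweight way to keep that documentation honest is to treat it as a structured record and check it for gaps before launch. The Python sketch below is a hypothetical illustration. The field names mirror the four items above, and the example deployment is invented.

```python
from dataclasses import dataclass, fields


@dataclass
class DeploymentNote:
    what_it_does: str = ""
    what_it_does_not_do: str = ""
    human_review_required: str = ""
    escalation_path: str = ""


def missing_sections(note: DeploymentNote) -> list[str]:
    """List the documentation sections that are still empty."""
    return [f.name for f in fields(note) if not getattr(note, f.name).strip()]


# Invented example: a draft note with no escalation path yet.
draft = DeploymentNote(
    what_it_does="Drafts first-pass replies to support tickets.",
    what_it_does_not_do="Does not send replies or issue refunds.",
    human_review_required="An agent approves every reply before sending.",
)

print(missing_sections(draft))
```

A check like this could run as part of a launch review, so no deployment reaches users with a blank section.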

Safe Channels for Feedback and Dissent

People need ways to raise concerns without fear of being labeled as resistant or obstructionist. Some of the most valuable feedback during an AI rollout comes from the people who are most skeptical. They spot failure modes that enthusiasts overlook.

Create structured channels: anonymous feedback forms, regular retrospectives focused on AI tool experience, and direct access to whoever owns the AI governance function. Surfacing issues early prevents them from becoming systemic obstacles downstream.

Build vs. Buy: Choosing the Right AI Adoption Path

Before your change management plan gets too far along, you need to resolve a strategic question that shapes everything downstream: should your organization build custom AI solutions, buy off-the-shelf tools, or partner with a specialized firm?

Each path has different implications for change management. Building in-house gives you maximum control but requires deep internal talent and governance infrastructure. Buying off-the-shelf is faster to deploy but harder to integrate into specific workflows. Partnering with a firm that specializes in AI-native delivery (the approach HatchWorks takes with GenDD) offers a middle path: production-grade methodology without the overhead of building an AI practice from scratch.

The right answer depends on your data maturity, your team's capabilities, and how differentiated the AI use case is to your business. For a structured way to think through this decision, HatchWorks' build vs. buy framework lays out the tradeoffs in detail.

What Successful AI Change Management Looks Like in Practice

Theory is useful. Seeing it applied is better.

HatchWorks has deployed GenDD across enterprise engineering organizations where structured change management, not just technical deployment, drove the results.

In one engagement with Vanco, a payments technology company, HatchWorks trained 180 engineers in GenDD methodology and delivered 41 production deliverables with 18 role-specific playbooks covering the full software development lifecycle. The VP of Software Development at Vanco called it a "transformative year" for their product and technology teams. That outcome wasn't about installing a tool. It was about redesigning how an entire engineering organization works with AI.

In another engagement with Xometry, a manufacturing marketplace, GenDD methodology applied to real feature implementation reclaimed 347 hours, with 87-95% savings on infrastructure, backlog generation, and mock data workflows. A small team using the methodology on actual sprint work, measured against pre-training baselines.

From AI Adoption to AI Transformation: Moving Beyond Pilots

The difference between organizations that get value from AI and those that don't isn't the technology they choose. It's whether they move past the pilot phase.

ISG's 2025 State of Enterprise AI Adoption Report found that only 31% of AI use cases studied had reached full production, double the prior year, but still a minority. The organizations that close this gap treat AI adoption as an ongoing operating discipline: continuous training, continuous workflow redesign, continuous measurement. That's where business value compounds.

How HatchWorks Helps Teams Navigate AI Change

HatchWorks AI doesn't just build AI tools. They help teams adopt AI as an operating model, with methodology, training, and production-grade delivery.

The entry point depends on where your organization is today.

Learn: The GenDD Training Workshop gets your engineering team building with AI in days. Half- or full-day sessions, customized to your team's skill level, using your real codebase. Your developers walk away ready to ship differently.

Lead: A Fractional Chief AI Officer provides executive-level AI strategy, governance, and use-case prioritization without the full-time hire. Designed for mid-market companies that need direction now.

Scale: Generative-Driven Development™ makes AI the default across your entire engineering org. From workflow redesign to governance frameworks to production delivery, GenDD is the structural operating model that turns AI adoption into AI transformation.

If your team is resisting AI, the problem probably isn't your team. It's the process. And the process is fixable.

FAQ: Change Management for AI Adoption

What is the difference between AI implementation and AI adoption?

Implementation is technical: installing tools, integrating systems, and making AI available for use. Adoption is human: embedding AI into daily workflows, shifting mindsets, and building the confidence people need to actually use it. You can implement AI in weeks. Adoption takes sustained, structured change management.

How long does AI change management take?

It depends on scope. A focused pilot with a single team can show measurable results in two to four weeks. Enterprise-wide transformation is ongoing. The best programs treat it as a continuous discipline, not a project with a deadline. The key is to start small, measure real outcomes, and iterate.

What's the biggest reason AI adoption fails?

People, not technology. Prosci's research points to three primary drivers: insufficient executive sponsorship (43%), unclear success metrics (cited by over half of managers and employees), and insufficient training on AI tools (38%). Fix those three, and you've addressed the majority of what causes AI initiatives to stall.

Do we need a Chief AI Officer to manage AI change?

Not necessarily a full-time one. A fractional CAIO model gives mid-market companies executive-level AI guidance (strategy, governance, cross-functional coordination) at a fraction of the cost of a permanent hire. It's the fastest path to strategic oversight for organizations that need leadership now but aren't ready to build a full AI executive function.