Everyone wants to know how they can leverage Large Language Models (LLMs) to get ahead in their career and to get their business ahead in its industry.

To help, we’ve put together this guide to effective large language model use cases, with a special look at when combining specialized models may be better than using just one.

Understanding Large Language Models (LLMs): Evolution and Efficiency

What Are Large Language Models?

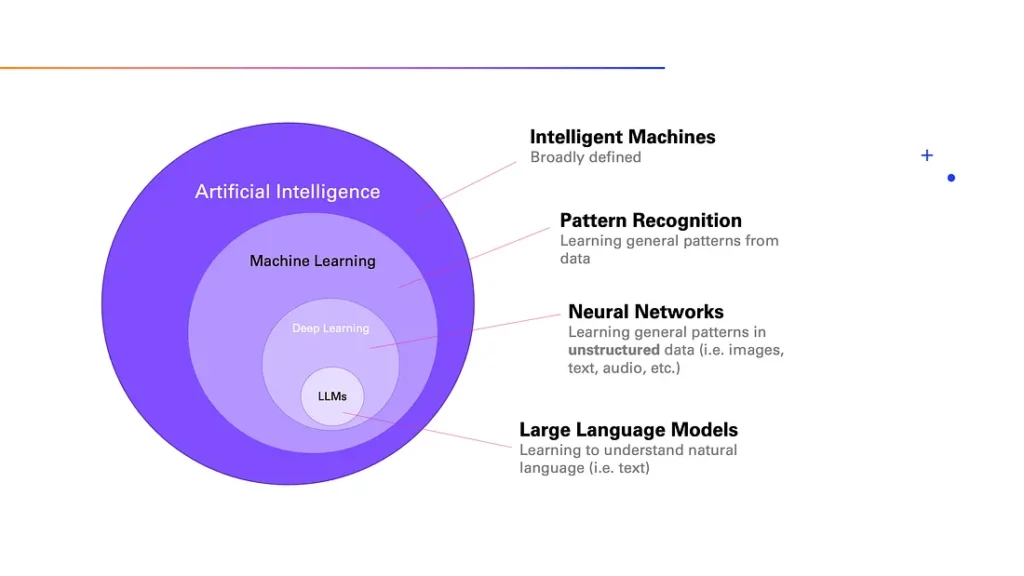

LLMs are advanced AI systems designed to understand and generate human language.

They contain billions of parameters and learn from an array of data sources. For example, ChatGPT was trained on content from the internet, with 300 billion words fed into the system.

Because of this training, LLMs can grasp the nuances of language, from syntax to semantics, and apply this understanding for unique text and content generation. Some even go beyond text generation and can create speech, code, images, video, and more.

LLMs form a pivotal part of Natural Language Processing (NLP). Natural Language Processing is a branch of artificial intelligence that focuses on enabling computers to understand, interpret, and generate human language in a way that is meaningful and useful.

Which is why you’ll see LLMs power applications ranging from chatbots and translation apps to content creation tools.

📚 Want to know how large language models work? Check out our article Large Language Models: Capabilities, Advancements, and Limitations [2024].

How Have LLMs Changed Over Time?

They’ve become capable of more complex tasks

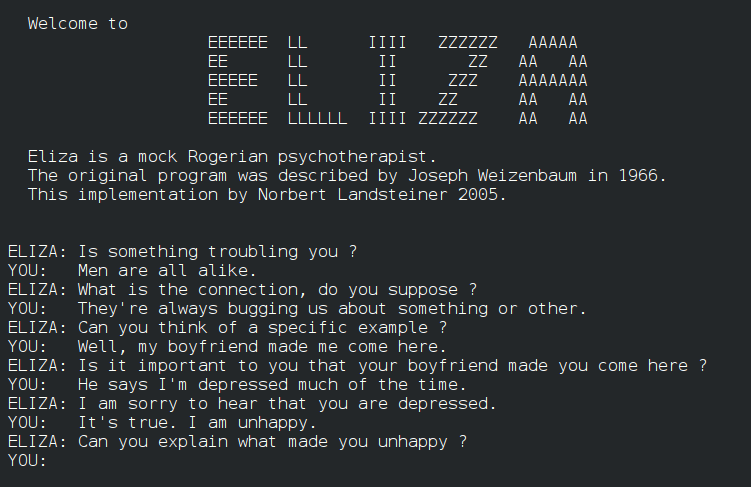

The roots of Large Language Models go back to the 1960s with the introduction of ELIZA, a rudimentary chatbot acting as a pseudo-therapist. ELIZA and other early language programs were capable of simple tasks like spell checks and generating pre-programmed answers.

ELIZA (1966): The first chatbot, created by Joseph Weizenbaum, simulating a psychotherapist in conversation.

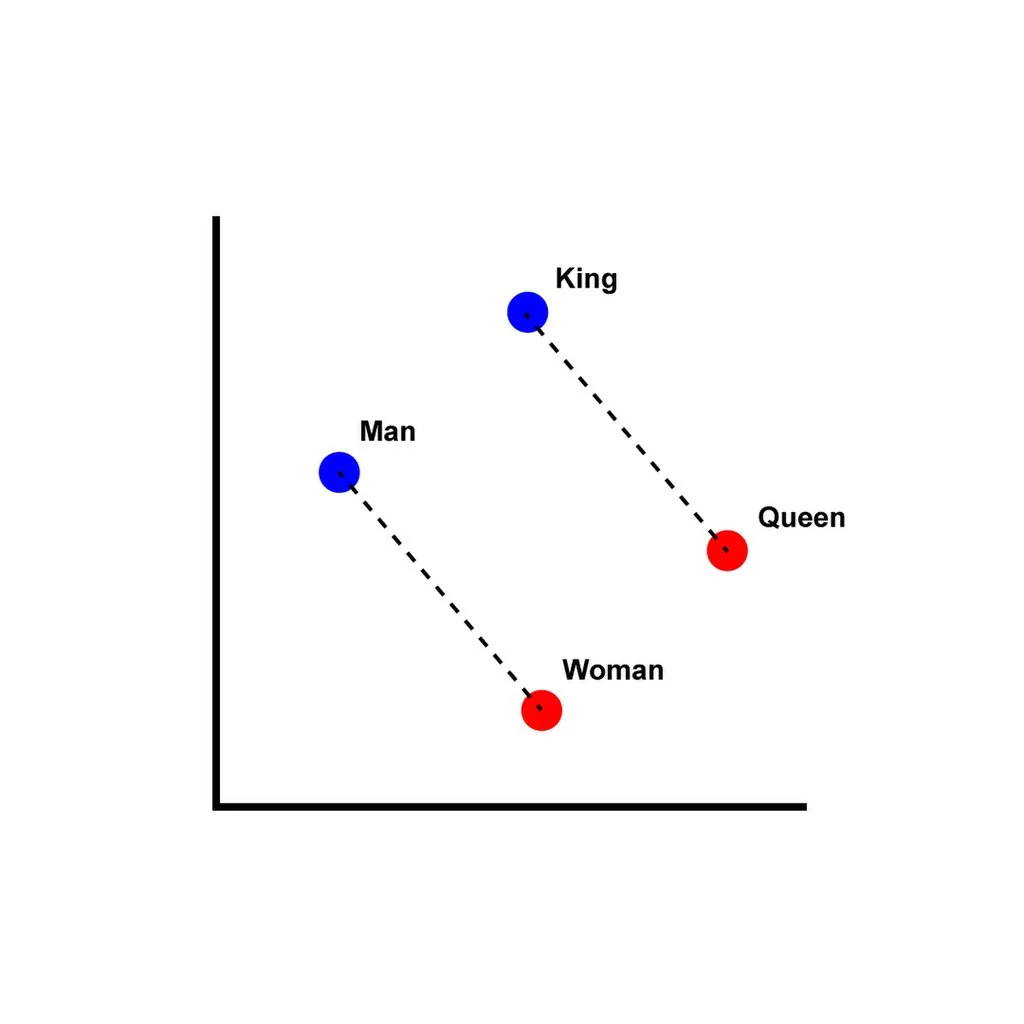

Word2Vec (2013): A groundbreaking tool developed by a team led by Tomas Mikolov at Google, introducing efficient methods for learning word embeddings from raw text.

- GPT (Generative Pretrained Transformer): OpenAI introduced GPT, showcasing a powerful model for understanding and generating human-like text.

- BERT (Bidirectional Encoder Representations from Transformers): Developed by Google, BERT significantly advanced the state of the art in natural language understanding tasks.

OpenAI released GPT-3, a model with 175 billion parameters, achieving unprecedented levels of language understanding and generation capabilities.

OpenAI introduced ChatGPT, a conversational agent based on the GPT-3.5 model, designed to provide more engaging and natural dialogue experiences. ChatGPT showcased the potential of GPT models in interactive applications.

OpenAI released GPT-4, an even more powerful and versatile model than its predecessors, with improvements in understanding, reasoning, and generating text across a broader range of contexts and languages.

The launch of Midjourney, along with other models and platforms, reflected the growing diversity and application of AI in creative processes, design, and beyond, indicating a broader trend towards multimodal and specialized AI systems.

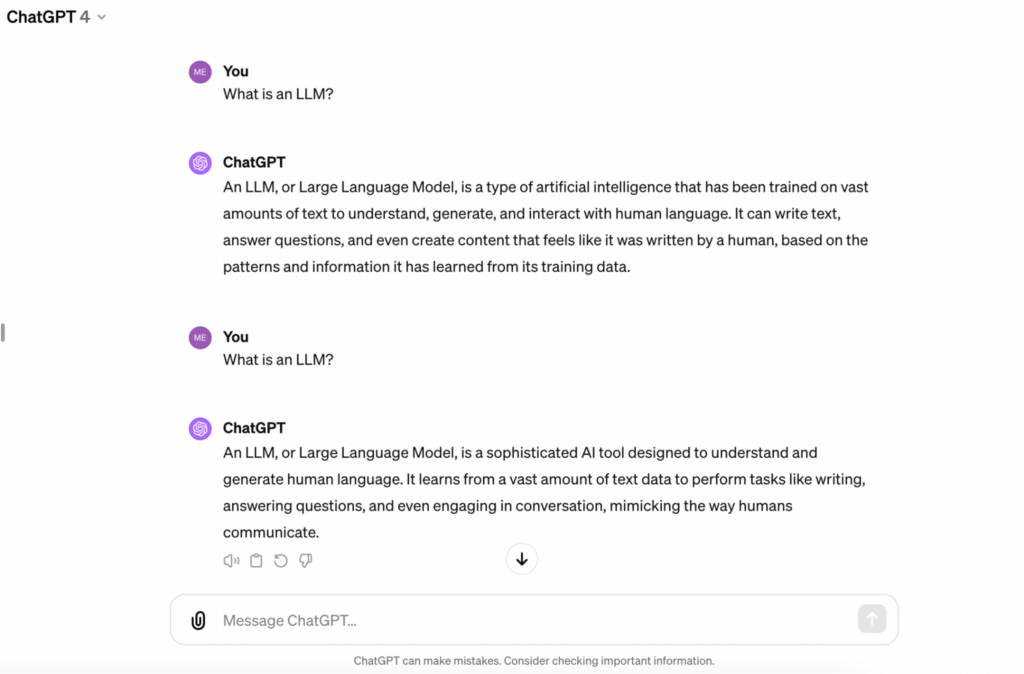

But today, LLMs like GPT (Generative Pre-trained Transformer) are capable of routine tasks such as writing essays, coding, and even creating art.

The content they create isn’t pre-programmed; it’s unique. In fact, if you ask ChatGPT the same question twice, it will present the relevant information differently each time.
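One reason the same question can produce different wording is that text generators typically sample each next word from a probability distribution instead of always picking the single most likely one. The sketch below is a toy illustration of that idea, not how ChatGPT is actually implemented: the candidate words, their scores, and the `sample_next_word` helper are all invented for the example.

```python
import math
import random

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_word(words, scores, temperature=1.0, rng=random):
    """Pick one word at random, weighted by its softmax probability."""
    probs = softmax(scores, temperature)
    return rng.choices(words, weights=probs, k=1)[0]

# Hypothetical candidates and scores for the word after "LLMs are ..."
words = ["powerful", "useful", "large", "everywhere"]
scores = [2.0, 1.5, 1.0, 0.2]

# Two runs of the same "question" may print different completions.
for _ in range(2):
    print("LLMs are", sample_next_word(words, scores, temperature=0.8))
```

Lowering `temperature` toward zero makes the top-scoring word win almost every time, while raising it spreads the choices out, which is roughly the trade-off between predictable and varied output.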

They’ve become even larger

LLMs have also gotten even larger. With the introduction of Transformers (a type of architecture an LLM is built on) in 2017, LLMs not only contain the billions of parameters we mentioned earlier, they can also process more data in a shorter time frame.

For example, a Transformer can look at all of the words in a sentence in the context of one another and predict appropriate responses. Pre-Transformer models, by contrast, had to process a sentence one word at a time.
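To make "looking at all of the words in context of one another" more concrete, here is a minimal sketch of the self-attention idea in plain Python. It is heavily simplified: real Transformers add learned query, key, and value projections, multiple attention heads, and position information, and the two-dimensional word vectors below are made up for illustration.

```python
import math

def softmax(row):
    """Normalize a list of scores into weights that sum to 1."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with no learned weights.

    Each output vector is a weighted average of ALL input vectors, so every
    word's new representation depends on every other word in the sentence.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word (itself included).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # Blend all word vectors according to those weights.
        mixed = [sum(w * vec[i] for w, vec in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up 2-dimensional embeddings for a three-word sentence.
sentence = ["the", "cat", "sat"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

outputs, weights = self_attention(embeddings)
for word, row in zip(sentence, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word draws on every word in the sentence. Because no row depends on the result of another, the rows can be computed in parallel, which is part of why this design scales better than strictly word-by-word processing.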

They’ve become more accessible to individuals and organizations

Just as impressive as their capabilities and knowledge base is how accessible LLMs have become.

They’ve gone from a technology only researchers and resource-rich organizations would be able to use to one just about anyone can access and use in their day-to-day lives. If you know how to use a computer, you can use an LLM.

There are a few reasons for this increase in accessibility:

There’s been significant growth in the community around AI and machine learning, with more educational resources available than ever before. Online courses, news articles, tutorials, and forums have made it easier for people to learn about LLMs and how to use them, fostering a wider pool of talent in the field.

Are LLMs as Efficient as Everyone Thinks?

We hear all the time that LLMs and Generative AI are a great way for businesses to reduce costs and increase productivity.

And we agree! But when you look below the surface of ‘efficiency’, you’ll find they aren’t all that efficient, especially when it comes to the amount of resources and energy required to run these systems.

As noted by MIT CSAIL postdoc Hongyin Luo, the environmental impact of training these Large Language Models is just as large, with CO2 emissions exceeding those of a car over its lifetime. Add to that the high operational costs required to maintain and run LLMs and you’ll see how unsustainable they are.

Which is why Hongyin Luo, and others, are looking to create more efficient and less resource-intensive models.

Luo shares:

“To solve these challenges, we proposed a logical language model that we qualitatively measured as fair, is 500 times smaller than the state-of-the-art models, can be deployed locally, and with no human-annotated training samples for downstream tasks. Our model uses 1/400 the parameters compared with the largest language models, has better performance on some tasks, and significantly saves computation resources.”

Hongyin Luo

So, yes, right now LLMs have an efficiency problem. But it’s one that’s likely to be solved because society’s hunger for Generative AI is only growing as more applications for its use emerge.

The Spectrum of LLM Applications

One of the great things about Large Language Models is how they can be applied and adapted to an array of use cases.

Need help brainstorming the name of your company’s new podcast? An LLM can do that.

Need to analyze a complex set of data points? An LLM has your back.

Need to see if there are bugs in the code you wrote? Yes, an LLM can help there too.

As long as language is involved in some form—be it English, mathematical equations, emojis, sign language, etc.—you can find a way for an LLM to support your task.

Search engines like Google use LLMs to enhance search results while software developers use Generative AI built on LLMs to find bugs in code segments.

The spectrum is large and ever growing as we experiment and develop Large Language Models to serve more use cases.

Common Large Language Model Use Cases in Business

Let’s look at how LLMs can be used in business to expedite and enhance different sectors.

LLMs in Software Development and Data Analysis

Code Generation and Assistance: Tools like GitHub Copilot utilize LLMs to suggest code snippets, complete lines of code, and even generate whole functions based on natural language queries and descriptions, significantly speeding up the development process.

Bug Detection: LLMs can analyze code to identify patterns of errors or potential issues, helping developers quickly find and fix bugs.

Documentation: Automatic generation of documentation for software projects is another area where LLMs excel, making the process more efficient and ensuring consistency across documentation.

Real-life LLM application:

| What: | How: | Outcome: |
|---|---|---|
| | We use various tools to define UX features and flows, generate foundational code, detect bugs, and more. | Our method has led to a 30-50% productivity increase for our clients. |

We have trusted HatchWorks with our most strategic development projects for over five years. Their Nearshore model, combined with their AI capabilities, has been a game-changer for our software development practice.

Taryn Owen

President & CEO, TrueBlue

If you’re interested in leveraging large language models in your software development projects and want to use a trusted Nearshore Software Development provider, get in touch with us here.

LLMs in Science

Research Acceleration: LLMs can process and summarize vast amounts of scientific literature, helping researchers stay up to date with the latest findings and identify research gaps.

Data Analysis: They are adept at analyzing complex datasets, identifying patterns, and making predictions, which can be particularly useful in fields like genomics or climate science.

Hypothesis Generation: By synthesizing existing knowledge, LLMs can propose new hypotheses for scientists to test, opening up new avenues of research.

Real-life LLM application:

| What: | How: | Outcome: |
|---|---|---|
| | They developed a database of descriptions for more than 140,000 crystals and used it to train an adapted LLM called T5. Now they can predict the properties of new materials more accurately and thoroughly than they could with their existing systems. | This work can accelerate the speed of technological development such as with batteries and semiconductors. |

LLMs in Healthcare

Medical Diagnostics: LLMs can support diagnostic processes by analyzing medical literature and patient data to suggest possible diagnoses.

Patient Interaction: They can power virtual health assistants for patient inquiries, providing information and guidance based on symptoms described in natural language.

Clinical Documentation: LLMs can assist healthcare professionals in the creation and management of clinical documentation and relevant data, making record-keeping more efficient and accurate.

Real-life LLM application:

| What: | How: | Outcome: |
|---|---|---|
| In the UK, the NHS (National Health Service) uses a chatbot called Florence to help assess patient symptoms. | Patients can use the text chatbot to get medicine reminders, track their health, and ask questions about symptoms. | Faster, personalized support that could help patients get the right medical attention. |

LLMs in Marketing

Product Description Generation: LLMs can automate the creation of detailed, search-engine-optimized product descriptions for e-commerce platforms, enhancing online visibility and sales.

Customer Insights: LLMs can analyze customer feedback, reviews, queries, and social media interactions to surface valuable insights into customer preferences and trends.

Personalized Communication: LLMs can help tailor marketing messages to individual customers, enhancing the effectiveness of email campaigns and other marketing communications.

Real-life LLM application:

| What: | How: | Outcome: |
|---|---|---|
| | AI handled first-draft brainstorms and SEO recommendations while the human team focused on areas of higher impact. | Their marketing team doubled their article output and increased blog traffic by 40%. |

LLMs in Finance

Fraud Detection: By using textual data, analyzing transaction patterns, and identifying anomalies, LLMs can help detect and prevent fraudulent activities.

Market Analysis: They can process vast amounts of financial data to provide market insights, forecasts, sentiment analysis, and trading recommendations.

Customer Service: Virtual assistants powered by LLMs can handle customer service inquiries, provide account information, and even offer financial advice.

Real-life LLM application:

| What: | How: | Outcome: |
|---|---|---|
| | It leverages past data and standard operating procedures to expedite the processing of claims. | The customer experience is improved and the finance team can get through claims faster, freeing them up for other work. |

Comparing Single vs. Multiple LLM Approaches

When thinking about the ways you can leverage Large Language Models, you need to consider if it’s more effective to use a single LLM or multiple LLMs simultaneously.

A single LLM will rely on one comprehensive model for a range of tasks while multiple LLMs rely on several specialized models. The multi-model approach is meant to achieve a greater level of accuracy for a set of specific tasks. A single LLM won’t be as specialized or as accurate but it will be capable of more tasks.

Both approaches come with their benefits and limitations and which one is most appropriate for you depends on what you want to achieve with LLMs and the resources you have at your disposal.

Below, we’ve built a table that shows these benefits and limitations side by side, as well as key points such as example use cases and when you should choose one approach over another.

| | One Large Model | Multiple Models |
|---|---|---|
| Benefits | | |
| Limitations | | |
| Example Use Case | A company integrates a single LLM to power a digital assistant that helps with various tasks, such as drafting emails, generating reports, providing customer support, and answering general queries. This approach is cost-effective, simplifies the technology stack, and ensures consistency in the responses and content generated. | A healthcare platform employs multiple LLMs: one for initial patient triage based on symptoms, another for diagnostic assistance using medical histories and clinical guidelines, and a third for treatment recommendations, considering the latest research and drug interactions. Each model is optimized for its specific task, ensuring high accuracy and reliability. |
| When to Use One Over the Other | Use a Single LLM When: | Use Multiple Specialized Models When: |
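For a feel of what the multi-model setup can look like in code, here is a minimal routing sketch based on the healthcare example. Everything in it is an assumption made for illustration: the `triage_model`, `diagnosis_model`, `treatment_model`, and `general_model` functions are stand-ins that return labeled strings, where a real system would call separately tuned models.

```python
from typing import Callable, Dict

# Stand-ins for specialized models. In a real system each would call a
# separately tuned model; here they only return labeled strings.
def triage_model(text: str) -> str:
    return f"[triage] urgency assessment for: {text}"

def diagnosis_model(text: str) -> str:
    return f"[diagnosis] candidate conditions for: {text}"

def treatment_model(text: str) -> str:
    return f"[treatment] options to review for: {text}"

def general_model(text: str) -> str:
    return f"[general] answer for: {text}"

# Map each task name to the specialist that handles it.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "triage": triage_model,
    "diagnosis": diagnosis_model,
    "treatment": treatment_model,
}

def route(task: str, text: str) -> str:
    """Send the request to the matching specialist, else the general model."""
    handler = SPECIALISTS.get(task, general_model)
    return handler(text)

print(route("triage", "chest pain for two hours"))
print(route("billing", "how do I update my card?"))
```

The single-model approach amounts to always calling `general_model`. The routing table is where the extra accuracy, and the extra maintenance cost, of the multi-model approach comes from.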

Deploying LLMs on Your Own Machine

Companies can take a third-party large language model and deploy it on their own machines. Think: a custom chatbot, automatic localization of content to multiple regions on their app or website, code debugging, and more.

We won’t get into it in too much detail here but we have an entire webinar on the why, how, and potential impact of deploying LLMs on your own machine.

You can access it here.

The Future of Large Language Models

Trends and Predictions

If the last few years are anything to go by, we’re in for an exciting future where language models grow in capabilities, find new applications, and become less resource intensive.

We also expect to see an evolution of how LLMs interact with one another. MIT is already experimenting, using LLMs to monitor and police the behaviors of other LLMs.

It’s a trend that will prove useful for companies who need to mitigate risk when it comes to AI hallucinations. After all, it could have saved Air Canada the headache and costs of a court case and prevented their chatbot from introducing a refund policy that doesn’t exist.
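As a rough picture of one model policing another, the sketch below wraps an assistant with a second "monitor" step that must approve each draft before it reaches the customer. This is not MIT’s method or any vendor’s API: `toy_assistant`, `toy_monitor`, and the `POLICY` text are hypothetical stand-ins, and a real monitor would itself be a model judging the draft against trusted sources.

```python
from typing import Callable

FALLBACK = "I'm not sure about that. Let me connect you with a human agent."

def guarded_reply(question: str,
                  assistant: Callable[[str], str],
                  monitor: Callable[[str, str], bool]) -> str:
    """Return the assistant's draft only if the monitor approves it."""
    draft = assistant(question)
    return draft if monitor(question, draft) else FALLBACK

# --- Stand-ins for two models (hypothetical, for illustration only) ---
POLICY = "Refund requests must be submitted before travel."  # invented text

def toy_assistant(question: str) -> str:
    # Pretend the assistant hallucinated a policy that does not exist.
    return "You can claim a refund within 90 days after travel."

def toy_monitor(question: str, draft: str) -> bool:
    # A real monitor would be a second model checking the draft against
    # trusted sources; this stand-in only accepts text found in POLICY.
    return draft in POLICY

print(guarded_reply("Can I get a refund later?", toy_assistant, toy_monitor))
```

Here the unsupported refund claim never reaches the customer, and the fallback message is sent instead. The design choice is to fail closed: when the monitor cannot confirm a draft, the system hands off, not guesses.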

We also expect an increased focus on user experience, ethical considerations, and specialization. LLM developers should, and likely will, look to gather more user feedback to work on these three areas.

There’s also a growing need to build human trust in AI’s ability to keep customer data safe, especially in corporate use, where sensitive information, from company financials to employee data and patient medical data, is put into these systems. You can expect development in this area to take place as LLM adoption increases.

Want to be on the cutting edge of LLMs and all things Generative AI?

Join us at HatchWorks as we train businesses just like yours on how to introduce their teams to AI and get the most out of early AI adoption.

And sign up for our newsletter, where we share weekly insights on large language model use cases, trends, market research, and developments across all things AI.

Frequently Asked Questions

1. What are LLMs and how can businesses leverage them?

Large Language Models (LLMs) are advanced artificial intelligence systems that use machine learning and neural networks to understand and generate human-like text. Businesses can leverage large language models for tasks such as content creation, language translation, and automating routine tasks to enhance efficiency and productivity.

2. How can LLMs help in automating routine tasks and improving customer satisfaction?

LLMs can automate routine tasks like answering user queries, providing relevant information, and handling customer feedback through virtual assistants. This not only saves time but also improves customer satisfaction by delivering quick and accurate responses.

3. Why might a business choose multiple specialized models over a single LLM?

Using multiple specialized models allows businesses to extract valuable insights from specific tasks like data analysis, sentiment analysis, and market research. Specialized models can provide more accurate results in their domain compared to a single, general-purpose LLM.

4. How do LLMs assist in overcoming language barriers and reaching customers in different languages?

LLMs can perform language translation and generate content in multiple languages, helping businesses overcome language barriers. This capability enables companies to engage with a global audience by creating social media posts, product descriptions, and news articles tailored to various languages and cultures.

5. What future trends in LLMs should businesses be aware of?

Future trends include more efficient AI systems that seamlessly integrate LLMs into existing workflows, advancements in text summarization, and enhanced abilities to analyze social media data. These developments will help businesses extract valuable insights, improve software development processes, and stay ahead in competitive markets.
